JustMental Statement | Meta/Instagram Teen Safety Alerts

Passing the Buck Isn’t Good Enough.

This week, Meta announced that Instagram will begin alerting parents when their teenagers repeatedly search for suicide and self-harm content.

On the surface, that sounds like progress. It isn’t. Not really.

Let’s be clear about what this actually is: a billion-dollar corporation, currently sitting in two separate courtrooms defending itself against allegations that it deliberately designed its platforms to addict and harm young people, announcing a feature that shifts responsibility onto parents.

Notifying a parent after the fact? That’s not protecting young people.

That’s a PR move dressed up as a safeguard.

The question nobody at Meta seems to be answering is this: why is that content still there to search for in the first place?

If your platform’s own policy is to block searches related to suicide and self-harm, then what are teenagers repeatedly searching for that’s triggering these alerts? The harmful content is clearly still accessible enough to send vulnerable young people down a very dark road before a parent notification ever lands on their phone.

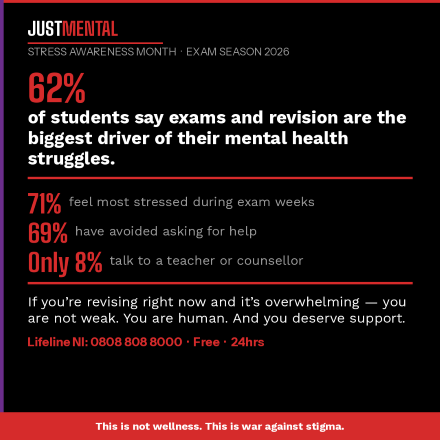

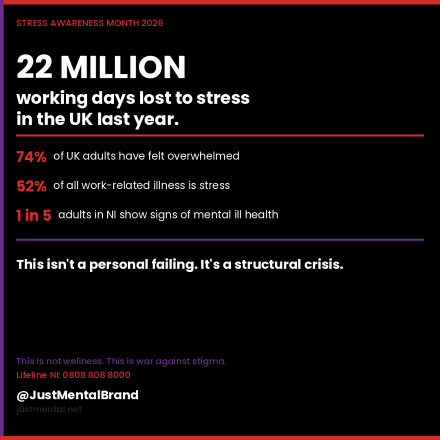

Mental health does not discriminate. It doesn’t care how old you are, where you’re from, or what your background is. But we know that when someone, teen or adult, is in crisis, they are at their most vulnerable.

And right now, social media platforms are profiting from that vulnerability while doing the bare minimum to address it.

At JustMental, we believe in radical honesty, because that’s what people in crisis deserve. So here it is: alerting parents after a teenager has repeatedly searched for methods of self-harm is not a safety net. It’s a receipt.

What we actually need from platforms like Instagram is threefold:

1. Aggressively pursue and remove those who create and distribute harmful content. Don’t just block searches: actively identify, report, and assist in the prosecution of those who produce content that glamourises suicide and self-harm.

2. Invest meaningfully in genuine mental health infrastructure: not resource links buried in a notification, but real, funded partnerships with mental health organisations that have actual clinical credibility.

3. Stop hiding behind opt-in parental supervision tools. The teens most at risk are often the least likely to have an engaged parent enrolled in a supervision programme. Build safety in by default, not by exception.

Meta’s announcement arrived the same week Mark Zuckerberg stood in a courtroom and maintained that the scientific evidence doesn’t prove social media causes mental health harm. You cannot simultaneously claim your platform is safe and announce emergency parental alert systems for suicide searches. Pick a lane.

We launched JustMental because we’re tired of institutions, corporate or otherwise, treating mental health as a box to tick. People are dying. In Northern Ireland alone, we carry the UK’s highest suicide rate and the weight of generational trauma that no notification to a parent’s WhatsApp is going to fix.

Do better, Meta. The people who need protecting deserve more than a press release.

JustMental — This is not wellness. This is war against stigma.